Software Architecture

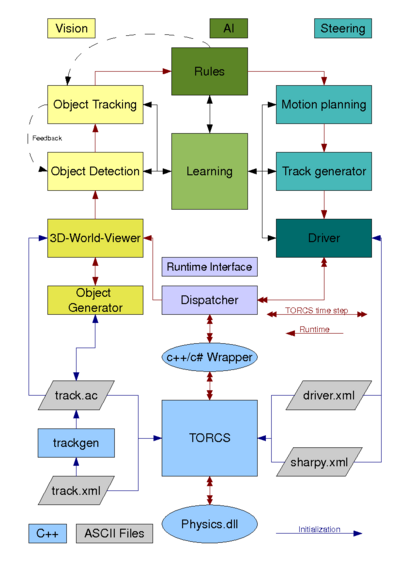


Revision as of 06:18, 6 July 2008

Codebase_Analysis

Multithreading

Architecture

Overview of modules

Driving

The driving module will use an interface like that of TORCS:

- Input: from the vision module and the world simulator

- Output: high level orders to the car simulator

Driving Hard Problems (Motion Planning)

- It seems we will encode rules such as: standard practice is to trail the car ahead by 2 seconds.

- And then we mention things like avoid other cars, don't make 3-G turns. The question is, how are those rules encoded?

- What about the situation where we want to take note of things for later, like the location of potholes? Assuming the vision module has decided that something is a pothole, how do we add it to the map, and remove it when we go by again because it has been fixed? In a racing game, we should make a note of a jump so that we don't go off a cliff. Suppose we just drive the car through the map to have it gather data. What is the maximum speed at which it could go through the map the first time?
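One possible way to encode the two example rules above (trail by 2 seconds, no 3-G turns) is as numeric limits checked against the current driving state. This is only a sketch under assumptions: the names `DrivingLimits`, `min_following_distance`, `max_corner_speed` and `violated_rules` are made up for illustration, and the turn limit uses the standard lateral acceleration formula a = v^2 / r.

```python
import math
from dataclasses import dataclass

G = 9.81  # standard gravity in m/s^2


@dataclass
class DrivingLimits:
    # Hypothetical container for rule parameters, not part of any existing module.
    following_gap_s: float = 2.0  # trail the car ahead by 2 seconds
    max_lateral_g: float = 3.0    # do not make turns harder than 3 G


def min_following_distance(speed_mps: float, limits: DrivingLimits) -> float:
    """Distance in metres travelled during the following gap at this speed."""
    return speed_mps * limits.following_gap_s


def max_corner_speed(turn_radius_m: float, limits: DrivingLimits) -> float:
    """Highest speed (m/s) for which v^2 / r stays within the lateral limit."""
    return math.sqrt(limits.max_lateral_g * G * turn_radius_m)


def violated_rules(speed_mps: float, gap_m: float, turn_radius_m: float,
                   limits: DrivingLimits) -> list:
    """Return the names of the rules the current state breaks."""
    violations = []
    if gap_m < min_following_distance(speed_mps, limits):
        violations.append("following_too_close")
    if speed_mps > max_corner_speed(turn_radius_m, limits):
        violations.append("turn_too_fast")
    return violations
```

Keeping each rule as a small function means the planner could either reject a candidate action that violates a rule or use the computed limit directly as a target. Whether rules should be hard constraints or soft costs is left open here.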

Codebase_Analysis#Motion_Planning

http://cig.dei.polimi.it/?cat=4

http://youtube.com/watch?v=ruHzCF3CHIA

Vision

Vision module I/O:

- Input: screenshot of the world (real or virtual).

- Output: object geometry and any other information the AI needs.

Vision Hard Problems

OCR: Is the functionality of Google's Tesseract [1] already covered by Intel's OpenCV library? (Aleks)

What is the roadmap for how we will use more and more of the Intel code? (Aleks)

- For M1 demo, how do we focus it on only finding cars in front of it? Just getting the pipeline running will be work. We will need to configure the right brightness constants so it knows what it is looking at, for example...

- What about doing training and object detection? Their website says we can hand it lots of pictures of cars and non-cars, and it will start to figure things out. How is that data stored? Can we tweak it?

- If we know the size of the car, we could use that to estimate distance without having 3-D images to deal with. (We should sketch out 3-D on the roadmap...)

- I think we should do blob detection first, and then attack objects. It might be that blobs are useless, and object recognition is much better. Fine, then blobs will only be used for a few days ;-) We should be working our way up.

- Radar is another type of input to a visual system. It mostly has less data than a 3-D image, but we could still build filters which tweak it and call the Intel code in a different way to get it to make sense. For now, let's focus only on 2-D and 3-D images, and we can do radar, sonar, etc. later.

- If we have both visual and radar, how do we build a system which synthesizes the best of both?
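The idea above of estimating distance from a known car size can be sketched with the pinhole camera model, where distance = focal length (in pixels) × real width / width in the image. This assumes the camera's focal length in pixels is known from calibration and that the car is seen roughly straight on. The function name and parameters are hypothetical.

```python
def estimate_distance_m(real_width_m: float, pixel_width: float,
                        focal_length_px: float) -> float:
    """Estimate distance to an object of known width using the pinhole model.

    real_width_m:    assumed physical width of the car in metres
    pixel_width:     width of the detected car in the image, in pixels
    focal_length_px: camera focal length expressed in pixels (from calibration)
    """
    if pixel_width <= 0:
        raise ValueError("pixel_width must be positive")
    return focal_length_px * real_width_m / pixel_width
```

For example, a car assumed to be 1.8 m wide that appears 90 pixels wide through a camera with an 800-pixel focal length would be estimated at about 16 m away. The estimate degrades when the car is seen at an angle or partly hidden, since the measured pixel width no longer matches the assumed real width.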

World Simulator

World simulator I/O:

- Input: Progression of time, instructions to the car

- Output: visual output for the user, screenshots for vision module, APIs to find out where stuff actually is. A warning when our car has "crashed", which means there is a bug in the vision or driving logic.

Extra Modules

Between C# and TORCS, the dispatcher

The dispatcher splits the information coming from TORCS and sends each piece to the relevant part. The current position and rotation of our car and of the opponents (including "parked cars" as obstacles) are sent to the world viewer. Information about our fuel, brake temperature, etc. is sent to the AI part (rules, learning) and to the driver. The driver also gets information from the onboard sensors (engine rpm, etc.). From the driver, the dispatcher gets back the commands to send on to TORCS. While learning, we can also send information to other parts, to get feedback about the quality of our individual steps and to help the parts work independently.
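The routing described above could look roughly like the following sketch. It is not the project's actual code: `TorcsState`, `CarPose`, `DriverCommand` and `Dispatcher` are hypothetical names, the fields are only the ones mentioned in the text, and the three consumers are modelled as plain callables.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class CarPose:
    x: float
    y: float
    yaw: float  # rotation of the car


@dataclass
class TorcsState:
    # Hypothetical snapshot of what TORCS reports each step.
    own_pose: CarPose
    opponent_poses: List[CarPose]  # includes "parked cars" used as obstacles
    fuel: float
    brake_temp: float
    rpm: float


@dataclass
class DriverCommand:
    steer: float
    throttle: float
    brake: float


class Dispatcher:
    """Splits one TORCS state into the pieces each part needs."""

    def __init__(self,
                 world_viewer: Callable[[CarPose, List[CarPose]], None],
                 ai: Callable[[Dict[str, float]], None],
                 driver: Callable[[Dict[str, float], Dict[str, float]], DriverCommand]):
        self.world_viewer = world_viewer
        self.ai = ai
        self.driver = driver

    def dispatch(self, state: TorcsState) -> DriverCommand:
        # Positions and rotations go to the world viewer.
        self.world_viewer(state.own_pose, state.opponent_poses)
        # Car status goes to the AI part and to the driver.
        status = {"fuel": state.fuel, "brake_temp": state.brake_temp}
        self.ai(status)
        # The driver additionally receives the onboard sensor readings.
        sensors = {"rpm": state.rpm}
        # The driver's answer is the command to send back to TORCS.
        return self.driver(status, sensors)
```

Passing the consumers in as callables keeps the dispatcher independent of how the world viewer, AI and driver are implemented, which fits the goal of letting the parts work independently.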